In boardrooms around the world, a familiar story unfolds. A company announces an ambitious AI initiative. The data science team builds a sophisticated model. Early results look promising. Leadership gets excited.

Then, somewhere between pilot and production, everything falls apart.

The model degrades. Predictions become unreliable. Business users lose confidence. And the initiative quietly joins the growing pile of abandoned AI projects that never delivered on their promise.

This article examines why AI projects fail, focusing on the underlying causes that derail even the most promising initiatives. It is written for business leaders, data scientists, and AI practitioners who are responsible for driving AI adoption and seeing implementations through. Understanding why so many AI projects fail helps you avoid wasted investment and missed opportunities, and helps you build a track record of successful, real-world AI deployments that you can show to employers and stakeholders.

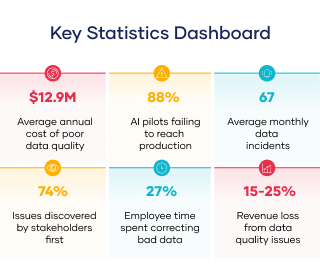

According to IDC research, 88% of AI pilot projects fail to reach production.¹ That’s not a typo. Nearly nine out of every ten AI initiatives that get the green light never make it to the finish line.

The question is: why?

It’s Not What You Think

When AI projects fail, the usual suspects are blamed. The algorithm wasn’t sophisticated enough. The team lacked the right skills. The budget ran out. The use case wasn’t viable.

But when researchers dig into what actually kills AI initiatives, a different culprit emerges—one that has nothing to do with machine learning complexity or organizational readiness.

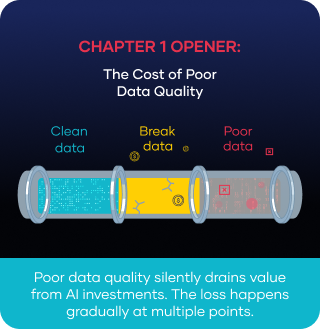

The Real Killer? Data Quality.

The same IDC research found that data quality issues are cited as the primary barrier to deploying AI in production. Not model accuracy. Not compute resources. Not executive buy-in. Data. Data governance practices help keep data consistent, trustworthy, and free from misuse, and maintaining data quality also supports regulatory compliance and reduces the risk of fines.

Data quality measures how well a data set meets criteria for accuracy, completeness, validity, consistency, uniqueness, timeliness, and fitness for purpose.

The Predictable Failure Pattern

What makes this particularly frustrating is how predictable the failure pattern is. Once you know what to look for, you can spot a doomed AI project from a mile away.

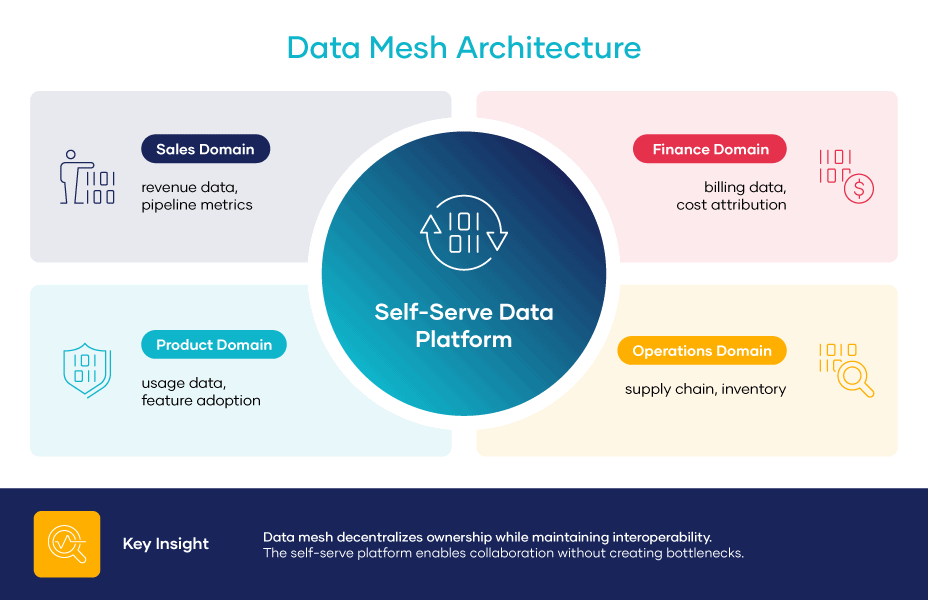

Data Management and Governance: The Overlooked Foundation

Behind every successful AI project, there’s a team that truly understands data management and governance. While it’s tempting to pursue the latest algorithms or cutting-edge machine learning techniques, the reality is that high-quality data is what truly drives smart decisions and business success, much like good insurance coverage protects you when life becomes unpredictable.

The Importance of Data Quality

Ensuring effective data management starts with regularly checking data quality. This means consistently reviewing your data for accuracy, completeness, and consistency, not just at the beginning of a project but throughout. Detecting data quality issues early can prevent problems that might harm your AI models and disrupt important business decisions.

Establishing solid data quality standards ensures your data remains reliable across your organization. These standards ensure that everyone, from your data engineers to your business analysts, is aligned on what constitutes good data quality. By managing your data effectively, you’ll reduce errors, obtain more trustworthy analytics, and feel confident in the insights that guide your strategy.

Industry-Specific Impacts

Poor data quality isn’t just a tech problem; it can seriously damage your business. In healthcare, for example, inconsistent or incorrect data can directly affect patient care. In insurance, it results in poor risk assessments and missed opportunities. No matter what your industry, poor data quality erodes trust, causes delays, and costs you money through avoidable mistakes.

Benefits of High-Quality Data

On the other hand, organizations that prioritize data quality experience clear advantages: fewer mistakes, more efficient operations, and improved business decisions. High-quality data gives your teams the confidence to act, knowing their analysis is based on solid, trustworthy data.

Ultimately, data management and governance are vital for your business’s success, not just the data team’s responsibility. Embedding data quality across your organization enables you to unlock its full potential, foster intelligent innovation, and achieve better results for your customers and stakeholders. It’s truly that simple.

Why This Keeps Happening

The Data Preparation Burden

The dirty secret of AI development is that data scientists spend remarkably little time on actual data science. Studies consistently show that data preparation consumes 60-80% of the time in any AI project. Reliable AI outcomes also depend on master data management to keep data consistent, accurate, and intact across the organization, on data engineering to build and scale data-driven solutions, and on clear guidelines for overseeing data management processes. But preparation isn’t the same as ongoing quality assurance.

Here’s the fundamental problem: AI models are trained on historical data but run on live data. Live data is messy, unpredictable, and constantly changing.
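One way to make the gap between historical and live data measurable is to compare the distribution of a feature at training time with its distribution in production. The sketch below uses the Population Stability Index (PSI), a common drift score. The function name, the bin count, and the clamping of out-of-range values are illustrative assumptions, not something prescribed by the research cited in this article.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a training-time sample and a live sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    # Equal-width bins over the training range; fall back to 1.0 if all values are equal.
    width = (hi - lo) / bins or 1.0

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp so live values outside the training range land in the edge bins.
            idx = int((v - lo) / width)
            counts[max(0, min(bins - 1, idx))] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A PSI near zero means the live data still looks like the training data. Rules of thumb often treat values above roughly 0.1 as worth watching and above roughly 0.25 as a significant shift, but those cutoffs are conventions and should be tuned per feature.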

Common Data Quality Pitfalls

Consider what can go wrong:

- Schema changes: An upstream system adds a new field or renames a column. Your pipeline breaks.

- Data drift: Customer behavior shifts. The patterns your model learned no longer apply.

- Missing values: A vendor stops populating a critical field. Your model starts making predictions on incomplete data.

- Freshness issues: Data that should refresh hourly starts lagging by days. Your ‘real-time’ predictions become stale.

- Duplicate records: A sync issue creates phantom data. Your model’s confidence becomes misplaced.

Any one of these issues can tank an AI model’s performance. In production environments, multiple issues often compound simultaneously.
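Several of these pitfalls can be caught with simple checks on each incoming batch. The sketch below is a minimal illustration using only the Python standard library. The column names, the 5% null-rate threshold, and the one-hour freshness limit are hypothetical values chosen for the example. Data drift is left out because it requires comparing distributions over time rather than applying a per-batch rule.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical expectations for one feed; real values depend on your pipeline.
EXPECTED_COLUMNS = {"customer_id", "email", "updated_at"}
MAX_NULL_RATE = 0.05
MAX_LAG = timedelta(hours=1)


def check_batch(rows, now):
    """Return a list of human-readable problems found in one batch of dict records."""
    if not rows:
        return ["batch is empty"]
    problems = []

    # Schema change: columns added, dropped, or renamed upstream.
    seen = set().union(*(row.keys() for row in rows))
    if seen != EXPECTED_COLUMNS:
        problems.append(
            f"schema changed: missing={sorted(EXPECTED_COLUMNS - seen)}, "
            f"unexpected={sorted(seen - EXPECTED_COLUMNS)}"
        )

    # Missing values: share of empty entries per expected column.
    for col in sorted(EXPECTED_COLUMNS & seen):
        null_rate = sum(row.get(col) in (None, "") for row in rows) / len(rows)
        if null_rate > MAX_NULL_RATE:
            problems.append(f"missing values: {col} is {null_rate:.0%} empty")

    # Freshness: the newest record should be recent (timestamps assumed timezone-aware).
    stamps = [row["updated_at"] for row in rows if row.get("updated_at")]
    if stamps and now - max(stamps) > MAX_LAG:
        problems.append(f"freshness: newest record is {now - max(stamps)} old")

    # Duplicates: the same key appearing more than once.
    ids = [row["customer_id"] for row in rows if row.get("customer_id") is not None]
    if len(ids) != len(set(ids)):
        problems.append(f"duplicates: {len(ids) - len(set(ids))} repeated customer_id values")

    return problems
```

Returning a list of problems rather than raising on the first one reflects the point above: in production, several issues often show up in the same batch.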

The Six Dimensions That Determine AI Success

Not all data quality issues are created equal. For AI specifically, six data quality dimensions matter most.

Data quality dimensions are vital criteria for assessing the overall quality of datasets. A data quality assessment framework arranges these aspects, such as completeness, timeliness, validity, integrity, uniqueness, and consistency, to systematically analyze and evaluate data quality.

Table 1.1: Six Dimensions That Determine AI Success

| Dimension | The Question | What Happens When It Fails |
|---|---|---|
| Freshness | How recently was this data updated? | Models make decisions on outdated information. |
| Completeness | Are all expected values present? | Models impute missing values incorrectly. |
| Consistency | Do values align across sources? | Models receive conflicting signals. |
| Accuracy | Do values reflect reality? | Models learn from, and perpetuate, errors. |
| Schema Stability | Is the structure predictable? | Pipelines break without warning. |
| Lineage | Can data be traced to its source? | Debugging becomes impossible. |

More broadly, data quality refers to the reliability, trustworthiness, and accuracy of information within a system.

When these dimensions are healthy, AI models have the foundation they need to succeed. When they’re compromised, no amount of algorithmic sophistication can make up for it.
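The consistency dimension, "Do values align across sources?", lends itself to a direct check. The sketch below compares records from two systems keyed by the same identifier and lists every field where they disagree. The function name and the record layout (a mapping from key to a dict of fields) are assumptions made for illustration.

```python
def find_inconsistencies(source_a, source_b, fields):
    """Compare two {key: record} mappings and list the fields whose values disagree.

    Only keys present in both sources are compared; keys found in just one
    source are a completeness question rather than a consistency one.
    """
    mismatches = []
    for key in sorted(source_a.keys() & source_b.keys()):
        for field in fields:
            value_a = source_a[key].get(field)
            value_b = source_b[key].get(field)
            if value_a != value_b:
                mismatches.append((key, field, value_a, value_b))
    return mismatches
```

Each result names the key, the field, and both conflicting values. That detail is what a model would otherwise receive silently as a conflicting signal.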

The 74% Problem

Here’s what makes this especially painful: in most organizations, data quality issues are discovered by the wrong people at the wrong time.

Research shows that 74% of data quality issues are initially identified by business stakeholders rather than the data teams responsible for the pipelines.²

Think about what that means. Your CFO opens a dashboard and sees numbers that don’t add up. Your sales team notices that lead scores have gone haywire. Your customer service reps realize the AI recommendations are way off. Maintaining high-quality customer data is critical for reducing costs and improving service, as it supports accurate delivery, effective marketing strategies, and better decision-making.

By the time these issues surface among business users, the damage is already done. Trust erodes. Confidence in the AI initiative collapses. And rebuilding that trust is far harder than maintaining it.

Data quality management tools help organizations maximize the use of their data and drive key benefits. High data quality helps streamline operations by simplifying workflows and reducing time spent on data management. Additionally, automating workflows with intelligent tools can enhance efficiency and decision-making across the organization.

The Path Forward

Modern Approaches to Data Quality

The good news is that this problem is solvable. The organizations that successfully deploy AI at scale have realized that data quality isn’t a one-time project; it’s an ongoing discipline. Big data presents unique challenges for data quality and the accuracy of AI applications, making continuous attention to data management essential.

They’ve made three fundamental shifts:

- From sampling to full coverage. Traditional data quality relied on statistical samples that might miss edge cases. Modern approaches monitor 100% of data flows.

- From batch to real-time. Issues that used to surface in nightly jobs are now detected as they occur, before they propagate downstream. Real-time data enables instant information processing in AI-driven applications, supporting faster decision-making and more accurate insights.

- From manual rules to machine learning. Instead of human-defined thresholds that can’t adapt, ML algorithms learn what ‘normal’ looks like and automatically flag anomalies. Machine learning algorithms are now used to evaluate and ensure data quality in various datasets, improving reliability and trustworthiness.
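The third shift, learning what "normal" looks like instead of hard-coding thresholds, can be illustrated with a deliberately simple statistical stand-in. The sketch below flags values of a pipeline metric (for example, daily row counts) that deviate strongly from the median of a trailing window, using the median absolute deviation as the spread estimate. It is not the method of any particular observability product. The window size and the 3.5 cutoff are assumptions, and production systems typically use richer models that account for seasonality and trend.

```python
from statistics import median


def flag_anomalies(values, window=7, threshold=3.5):
    """Return indices of points that deviate strongly from their trailing window."""
    flagged = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        center = median(history)
        # Median absolute deviation: a spread estimate that outliers barely move.
        mad = median(abs(v - center) for v in history)
        if mad == 0:
            # A perfectly flat history: treat any change at all as anomalous.
            is_anomaly = values[i] != center
        else:
            # 0.6745 rescales MAD so the score is comparable to a z-score.
            is_anomaly = 0.6745 * abs(values[i] - center) / mad > threshold
        if is_anomaly:
            flagged.append(i)
    return flagged
```

Because the baseline is recomputed from recent history at every step, the check adapts as the metric's normal level changes, which a fixed human-defined threshold cannot do.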

Edge computing processes data nearer to its source, supporting high-performance and real-time applications, but also presents challenges in maintaining data accuracy and consistency at the edge. To address these challenges, it is crucial to regularly assess the effectiveness of data quality solutions and processes. Having a clear process and control mechanisms is vital for ongoing data quality management, ensuring data integrity and preventing errors throughout the data lifecycle.

Continuous Data Quality Management

Organizations that implement these approaches report 40% faster incident resolution compared to reactive methods.³ But the real value isn’t faster fixes; it’s prevention: catching issues before they cascade through your data ecosystem.

How Understanding Data Quality Drives AI Project Success

Understanding data quality in AI projects is essential for anyone looking to complete and showcase AI projects, improve their skillset, and demonstrate practical applications to employers. By mastering data quality management, business leaders, data scientists, and AI practitioners can ensure their projects reach production, deliver real business value, and stand out in a competitive job market. Building real-world AI projects not only enhances your portfolio but also sharpens your expertise as an AI engineer or data scientist, making you more attractive to prospective employers. Whether you’re working on foundational machine learning or advanced generative AI applications, a strong grasp of data quality principles is key to demonstrating your capabilities and achieving impactful results.

Key Takeaways

- AI doesn’t fail because of bad algorithms; it fails because of poor-quality data. The 88% failure rate is a data-quality crisis, not a machine-learning crisis. High-quality data enables confident, informed decisions and is critical for effective decision-making in AI projects.

- The failure pattern is predictable. Enthusiasm → Reality → Firefighting → Abandonment. Knowing this pattern is the first step toward breaking it. Not having all the data necessary for accurate predictions can complicate AI projects and increase the risk of failure.

- Six dimensions determine AI success. Monitor freshness, completeness, consistency, accuracy, schema stability, and lineage. All six aspects are crucial. High data quality reduces costs by minimizing the expenses of fixing bad data and avoiding expensive errors and disruptions.

- Proactive beats reactive. When business users discover data problems before your team does, you’ve already lost the battle for trust. Ethical considerations in data quality are gaining importance, especially in AI and machine learning applications.

- Modern data observability changes the game. Full coverage, real-time detection, and ML-powered anomaly detection are no longer optional for serious AI initiatives. Enterprise intelligence leverages structured data and data quality initiatives to improve organization-wide decision-making, research, and automated processes.

Sources

¹ International Data Corporation. (2024). AI pilot-to-production research [Research report in partnership with Lenovo]. As cited in Nash, K. S. (2025, March 25). 88% of AI pilots fail to reach production. CIO. https://www.cio.com/article/3850763/88-of-ai-pilots-fail-to-reach-production-but-thats-not-all-on-it.html

² Redman, T. C. (2017). Seizing the opportunity in data quality. MIT Sloan Management Review. https://sloanreview.mit.edu/article/seizing-opportunity-in-data-quality/

³ Confluent. (2024). 2024 data streaming report: Breaking down the barriers to business agility and innovation. https://www.confluent.io/resources/report/2024-data-streaming-report/