Let’s talk about a number that should keep every data leader up at night.

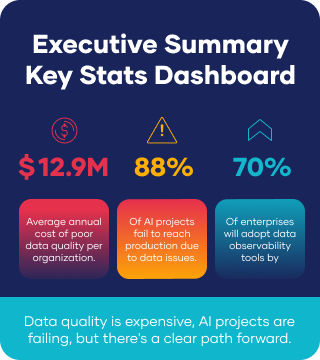

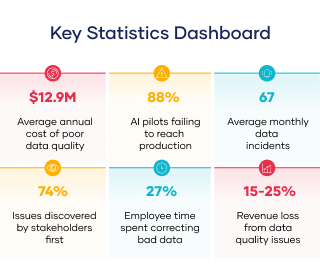

$12.9 million.

That’s the average annual cost of poor data quality per organization.¹ Not the cost of a significant breach or a catastrophic system failure. Just the ongoing, everyday cost of data that isn’t quite right.

If that number seems high, you’re not alone. Most executives dramatically underestimate what poor data costs their organizations. The expenses hide in plain sight, buried in reprocessing costs, manual corrections, missed opportunities, and decisions made on faulty information.

This is the crisis no one talks about. Not because it’s unimportant, but because it’s so diffuse and pervasive that it’s become invisible. Like a slow leak in your basement, the damage accumulates quietly until one day you realize the foundation is compromised.

Where the Money Actually Goes

When we talk about ‘data quality costs,’ what are we actually referring to? The $12.9 million breaks down into several categories, each representing real dollars flowing out of your organization.

Reprocessing failed pipelines. Every time a data pipeline fails and needs to be re-run, you pay for compute twice. As data volumes increase, managing and monitoring data pipelines becomes more complex and costly, making failures even more expensive. Implementing automated data quality checks is essential to catch issues before pipelines fail, reducing unnecessary reprocessing and associated costs.
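As a rough illustration of an automated check that runs before the expensive part of a pipeline, the sketch below gates a batch on row count and required fields. The function names, field names, and thresholds are hypothetical and chosen only for illustration; a real pipeline would likely use its orchestrator's or a data quality tool's own checks.

```python
from dataclasses import dataclass


@dataclass
class CheckResult:
    name: str
    passed: bool
    detail: str


def run_quality_gate(rows, required_fields, min_rows):
    """Run cheap checks on a batch before any expensive processing starts."""
    results = []

    # Volume check: an unexpectedly small batch often signals an upstream failure.
    results.append(CheckResult(
        "row_count", len(rows) >= min_rows,
        f"{len(rows)} rows (minimum {min_rows})",
    ))

    # Completeness check: count rows where a required field is missing or empty.
    for field_name in required_fields:
        missing = sum(1 for row in rows if row.get(field_name) in (None, ""))
        results.append(CheckResult(
            f"not_null:{field_name}", missing == 0, f"{missing} missing values",
        ))
    return results


def gate_passed(results):
    """The batch proceeds only if every check passed."""
    return all(r.passed for r in results)


# Hypothetical batch with one missing amount.
batch = [
    {"order_id": 1, "amount": 40.0},
    {"order_id": 2, "amount": None},
]
results = run_quality_gate(batch, required_fields=["order_id", "amount"], min_rows=1)
for r in results:
    print(f"{'PASS' if r.passed else 'FAIL'} {r.name}: {r.detail}")
if not gate_passed(results):
    print("Batch quarantined; downstream job not started.")
```

The point of the design is ordering: the checks cost almost nothing compared with the job they protect, so a failed gate avoids paying for compute twice.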

Manual correction efforts. When data quality issues slip through to production, someone has to fix them. Gartner estimates that employees spend an average of 27% of their time addressing data quality issues.² That’s more than a quarter of your team’s capacity devoted to cleanup instead of creation. A data quality assessment framework provides a structured way to evaluate and address these issues across the organization, improving efficiency and reducing manual intervention.

Wasted compute on bad data. Processing bad data costs just as much as processing good data, but you don’t get any value from it. Every query run against stale data, every model trained on incomplete records, and every dashboard rendered with inaccurate metrics represents compute spend with zero return.

Zombie pipelines. Automated processes that keep running long after their business purpose has ended consume compute resources month after month, invisible to everyone except whoever’s paying the cloud bill.
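One way to surface zombie pipelines is to compare when a pipeline last ran with when anyone last read its output. The sketch below assumes that metadata is available as simple records; the field names (`last_run`, `last_output_read`) and the 90-day idle window are assumptions for illustration, not a standard.

```python
from datetime import datetime, timedelta


def find_zombie_pipelines(pipelines, now, idle_days=90):
    """Return names of pipelines that still run but whose output nobody has read recently."""
    cutoff = now - timedelta(days=idle_days)
    zombies = []
    for p in pipelines:
        still_running = p["last_run"] >= cutoff
        last_read = p["last_output_read"]  # None means the output was never read
        unread = last_read is None or last_read < cutoff
        if still_running and unread:
            zombies.append(p["name"])
    return zombies


# Hypothetical pipeline metadata.
now = datetime(2025, 6, 1)
pipelines = [
    {"name": "daily_sales", "last_run": datetime(2025, 5, 31),
     "last_output_read": datetime(2025, 5, 30)},
    {"name": "legacy_export", "last_run": datetime(2025, 5, 31),
     "last_output_read": datetime(2024, 11, 2)},
    {"name": "retired_job", "last_run": datetime(2024, 1, 15),
     "last_output_read": None},
]
print(find_zombie_pipelines(pipelines, now))
```

Here only `legacy_export` is flagged: it ran yesterday, but its output has not been read in months. A flag like this is a prompt to ask the owner, not proof that the pipeline can be switched off.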

The Hidden Costs of Poor Data Quality That Don’t Show Up in Budgets

The $12.9 million figure is conservative. The real damage often occurs in ways that never appear on a balance sheet.

Failed AI initiatives. 88% of AI pilot projects fail to reach production, primarily due to data quality issues.³ Each failure represents lost investment and opportunity cost.

Eroded trust. When business stakeholders encounter unreliable data, such as conflicting reports or outdated dashboards, they stop trusting it. High data quality builds confidence in analytics tools and business intelligence dashboards, so business users rely on them for decisions instead of makeshift spreadsheets.

Delayed decisions. Questionable data leads to decision delays as teams verify and validate it, which can result in missed opportunities in fast-moving markets.

Regulatory exposure. With regulations such as DORA, the EU AI Act, and GDPR in force, poor data quality is a compliance risk. To answer a GDPR data subject request, for example, an organization must locate every record of an individual, and inaccurate or inconsistent data makes it easy to miss some. Failing to demonstrate data lineage and accuracy can result in penalties.

The Revenue Impact

McKinsey research indicates organizations attribute 15-25% of revenue loss to data quality issues.⁴ This occurs through:

- Customer churn driven by poor data—wrong recommendations, inaccurate billing, and poor personalization. Accurate and complete customer data is essential for effective communication and personalized interactions.

- Missed sales opportunities when lead scoring or customer insights are unreliable.

- Pricing errors that either leave money on the table or drive customers away.

- Inventory mismanagement leading to stockouts or excess carrying costs. Accurate supply chain data is critical for optimizing logistics.

- Marketing waste when campaigns target the wrong audiences with the wrong messages.

Data Integrity and Security: The Unseen Risk

Data quality management isn’t something most organizations think about daily until it becomes necessary. Data integrity guarantees that information stays accurate and helps prevent unauthorized changes or file damage. Data security shields data from external threats like hackers and breaches that could damage your company’s reputation and finances.

Poor data quality opens doors to risks. Inaccurate or incomplete data makes it harder to detect unauthorized access, and inconsistent records create exploitable gaps. High-quality data is the foundation of trust for customers, regulators, and partners.

Building integrity and security into every data process—from entry to storage and analysis—protects against costly problems, ensures compliance, and maintains data as a trustworthy resource for decision-making and innovation.

Why This Problem Persists

If data quality costs so much, why isn’t it prioritized?

Costs are distributed. Expenses are spread across engineering hours, cloud compute, lost productivity, and business impact, obscuring the total.

Problems are normalized. Daily data issues become background noise. Teams develop workarounds and accept dysfunction as normal.

Prevention is invisible. Executives notice incidents that are fixed but rarely see incidents that are prevented.

Ownership is fragmented. Data quality issues cross organizational boundaries. Without clear ownership, accountability diffuses. Appointing data stewards is essential for managing data quality and ensuring accountability. Clear and standardized data definitions maintain quality, integrity, and reliability.

Establishing a data governance framework creates accountability and standard procedures for data handling across the organization.

67 Incidents Per Month

Research shows the average organization experiences 67 data incidents per month.⁵ That’s more than two incidents every day. Each incident triggers investigation, a fix, stakeholder communication, and downstream impact.

Table 2.1: 67 Data Incidents Per Month

| Metric | Value | Impact |
|---|---|---|
| Monthly incidents | 67 average | Constant firefighting mode |
| Investigation time | 2-4 hours per incident | 134-268 hours/month |
| Resolution time | Varies by severity | Additional resource drain |
| Downstream analysis | Often multi-team | Productivity multiplier effect |

At this pace, teams remain reactive, leaving little room for proactive data quality initiatives. New data types and changing regulations lead to more frequent and complex data quality issues.
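The investigation-hours row in Table 2.1 is simple multiplication, and it can be useful to rerun it with your own numbers. The sketch below reproduces the 134-268 hour range from the table; the conversion to full-time engineers uses 160 working hours per month, which is an assumption and not a figure from the research.

```python
INCIDENTS_PER_MONTH = 67      # average from the text
HOURS_PER_INCIDENT = (2, 4)   # investigation range from Table 2.1
HOURS_PER_FTE_MONTH = 160     # assumption: 40 hours x 4 weeks


def monthly_investigation_hours(incidents, hours_range):
    """Return the (low, high) investigation hours per month."""
    low, high = hours_range
    return incidents * low, incidents * high


low, high = monthly_investigation_hours(INCIDENTS_PER_MONTH, HOURS_PER_INCIDENT)
print(f"Investigation load: {low}-{high} hours/month")
print(f"Roughly {low / HOURS_PER_FTE_MONTH:.1f}-{high / HOURS_PER_FTE_MONTH:.1f} full-time engineers")
```

Under that assumption, investigation alone consumes about one to two engineers every month, before any resolution or downstream work is counted.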

Data Quality Metrics and Monitoring: Measuring What Matters

Reliable data is crucial for reports, decisions, and customer understanding. You can’t manage what you don’t measure. Data quality metrics are your roadmap, showing where problems exist and tracking progress.

Key measures such as accuracy, completeness, and consistency indicate whether the data supports business needs. Grouping metrics by dataset, pipeline, or business domain helps pinpoint where improvement is needed.

Automated monitoring tools keep watch 24/7, catching issues as they arise. This proactive approach prevents problems from snowballing, saving headaches and wasted resources.

Regularly tracking data quality metrics keeps information reliable, useful, and ready, enabling ongoing improvements and sustained business value.

Breaking the Cycle with Data Quality Management

Organizations that escape the data quality trap treat quality as infrastructure.

They measure what matters. Leading organizations rigorously track data quality metrics across multiple dimensions: accuracy, completeness, consistency, validity, and timeliness (freshness). Regular assessment shows where to improve and keeps quality high.
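To make a few of these dimensions concrete, here is a minimal sketch that computes completeness, validity, and freshness for a small set of records. The sample records, the `'@' in value` email rule, and the six-hour freshness window are illustrative assumptions; real rules depend on the dataset and its consumers.

```python
from datetime import datetime, timedelta


def completeness(rows, field_name):
    """Share of rows where the field is present and non-empty."""
    if not rows:
        return 0.0
    filled = sum(1 for r in rows if r.get(field_name) not in (None, ""))
    return filled / len(rows)


def validity(rows, field_name, is_valid):
    """Share of non-empty values that satisfy a rule."""
    values = [r[field_name] for r in rows if r.get(field_name) not in (None, "")]
    if not values:
        return 0.0
    return sum(1 for v in values if is_valid(v)) / len(values)


def is_fresh(rows, ts_field, now, max_age):
    """True if the newest record is within the allowed age."""
    newest = max(r[ts_field] for r in rows)
    return now - newest <= max_age


# Hypothetical customer records.
now = datetime(2025, 6, 1, 12, 0)
rows = [
    {"email": "a@example.com", "updated_at": datetime(2025, 6, 1, 9, 0)},
    {"email": "not-an-email", "updated_at": datetime(2025, 5, 31, 9, 0)},
    {"email": None, "updated_at": datetime(2025, 5, 30, 9, 0)},
    {"email": "b@example.com", "updated_at": datetime(2025, 5, 29, 9, 0)},
]
print(f"completeness: {completeness(rows, 'email'):.2f}")
print(f"validity: {validity(rows, 'email', lambda v: '@' in v):.2f}")
print(f"fresh: {is_fresh(rows, 'updated_at', now, timedelta(hours=6))}")
```

Tracked over time, even simple ratios like these show whether quality is improving or drifting.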

They invest in observability. Modern data observability provides visibility into data health across the pipeline. Data quality tools diagnose and fix issues through record matching, deduplication, validation of new data, and remediation policies. Data profiling examines datasets for anomalies before analysis. Teams trace issues to root causes, not just symptoms.

They shift left. Instead of catching problems downstream, organizations validate data at ingestion. Implementing data quality rules and standards ensures data conformity. Schema changes and anomalies trigger alerts before pipeline breakage. Regular data cleaning is routine, not one-off.
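Validation at ingestion can be as simple as comparing each incoming record with an expected schema and raising an alert on any difference. The sketch below is one possible shape for that check; `EXPECTED_SCHEMA`, the field names, and the `ingest` helper are hypothetical, and production systems would more likely rely on a schema registry or a validation library.

```python
# Hypothetical expected schema: field name -> Python type.
EXPECTED_SCHEMA = {"order_id": int, "customer_id": int, "amount": float}


def schema_drift(record, expected):
    """Compare one incoming record with the expected schema and list any differences."""
    issues = []
    for field_name, expected_type in expected.items():
        if field_name not in record:
            issues.append(f"missing field: {field_name}")
        elif not isinstance(record[field_name], expected_type):
            issues.append(
                f"type change: {field_name} is {type(record[field_name]).__name__}, "
                f"expected {expected_type.__name__}"
            )
    for field_name in record:
        if field_name not in expected:
            issues.append(f"unexpected field: {field_name}")
    return issues


def ingest(records, expected):
    """Accept clean records; collect (index, issues) alerts for the rest."""
    accepted, alerts = [], []
    for i, record in enumerate(records):
        issues = schema_drift(record, expected)
        if issues:
            alerts.append((i, issues))
        else:
            accepted.append(record)
    return accepted, alerts


incoming = [
    {"order_id": 1, "customer_id": 7, "amount": 19.99},
    {"order_id": 2, "customer_id": 8, "amount": "19.99"},  # amount arrived as text
    {"order_id": 3, "amount": 5.0, "coupon": "X"},         # field dropped, field added
]
accepted, alerts = ingest(incoming, EXPECTED_SCHEMA)
print(f"accepted {len(accepted)} of {len(incoming)} records")
for index, issues in alerts:
    print(f"record {index}: {'; '.join(issues)}")
```

Rejected records are reported rather than silently dropped, so the alert reaches someone before a downstream pipeline breaks.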

They unify quality and cost. Poor data quality and inconsistent formats drive up cloud costs and risk, and cost optimization without quality context creates new issues. Continuous monitoring detects problems such as missing values and duplicates so they can be fixed before they undermine analytics. It also helps to separate data quality from data integrity: quality emphasizes accuracy and fitness for purpose, while integrity ensures trustworthiness and protection. Maintaining and improving data quality supports master data management, enables data-driven decisions, and is core to every data governance initiative.

Data Quality Awareness and Culture: Making It Everyone’s Problem

Reliable data is essential for reports, customer records, sales data, and analytics. Building a culture where everyone cares about data quality shouldn’t be complicated.

Smart organizations offer practical methods that fit daily tasks and don’t require technical expertise. From data-entry training to full-scale quality programs, they help teams understand sound data practices.

Education enables people to identify and resolve issues, promoting a sense of ownership. Clear policies and governance structures ensure quality remains a priority throughout the data process.

Good data isn’t just about avoiding mistakes; it’s about peace of mind. Collaboration among data teams, business units, and executives promotes a unified approach to managing surprises such as inconsistent formats, missing information, or duplicates.

No one plans for data problems, but a strong culture keeps organizations prepared. When everyone is on board, the people closest to real-world data challenges help build reliable, trustworthy information with less wasted time and frustration.

Key Takeaways

- The cost is real and significant. The average is $12.9 million annually—your organization may pay more. Hidden costs include failed AI initiatives and eroded trust.

- The costs hide in plain sight. Reprocessing, manual corrections, wasted compute, and zombie pipelines drain resources without clear budget lines.

- 27% of employee time goes to cleanup. Over a quarter of team capacity is spent addressing data issues instead of creating value.

- 67 incidents per month is the norm. Teams remain reactive without time for prevention.

- Modern observability changes the equation. Full coverage, real-time detection, and unified quality-cost visibility turn data quality from an unseen burden into a manageable asset.

Sources

¹ Gartner, Inc. (2025, February 26). Lack of AI-ready data puts AI projects at risk [Press release]. https://www.gartner.com/en/newsroom/press-releases/2025-02-26-lack-of-ai-ready-data-puts-ai-projects-at-risk

² Gartner, Inc. (2025, March 5). Gartner identifies top trends in data and analytics for 2025 [Press release]. https://www.gartner.com/en/newsroom/press-releases/2025-03-05-gartner-identifies-top-trends-in-data-and-analytics-for-2025

³ Ryseff, J., De Bruhl, B. F., & Newberry, S. J. (2024). The root causes of failure in artificial intelligence projects and how to succeed. RAND Corporation. https://www.rand.org/pubs/research_reports/RRA2680-1.html

⁴ Experian. (2024, April). Data governance report 2024. Experian Data Quality. https://www.experian.co.uk/blogs/latest-thinking/data-quality/data-governance-report/

⁵ Gartner, Inc. (2024, October). Follow these five steps to make sure your data is AI-ready. https://www.gartner.com/en/documents/5733596